A letter from our CEO

In the life science industry, one that has slowly and deliberately built processes, regulations, and standards to ensure clinical trial data integrity and patient safety, artificial intelligence represents a stark contrast. It offers speed that can feel both liberating and energizing, yet to the inherently cautious QA leader, it may also seem precipitous and unsettling.

The question isn’t whether to embrace AI. It’s about how to do so without compromising what has made our industry trustworthy. Our stance is clear: embrace AI, but with guardrails.

We are all in, but we believe in modernizing without forgetting why our standards exist in the first place. It's about embracing AI's potential while staying grounded in the human expertise and judgment that no algorithm can replace.

This means that humans must remain at the center of all that we do, and of all decisions that are made.

The opportunity is immense, as you will see from the collective viewpoint in this report, though it is not without some tension. The excitement exists directly alongside the uncertainty around how to move forward.

Let's dig into the insightful data we collected.

Paul Carter

Founder, President & CEO, Montrium

In a nutshell

An industry in thoughtful transition

The clinical research industry is standing at the edge of AI adoption in quality management: curious and cautiously optimistic, but hesitant due to regulatory uncertainty and a lack of internal expertise.

47% of organizations have no AI use in clinical quality today, with another 35% only experimenting with pilots.

Regulatory uncertainty is the #1 barrier to adoption, cited by 32% of respondents, more than any other concern.

48.5% of organizations have no clear owner for AI governance, with most still evaluating how to govern AI.

Confidence is high (average 7/10) that AI can improve quality operations, but implementation is low.

QA professionals expect their roles to shift dramatically from execution to oversight within 3-5 years.

Critical thinking and validation oversight is seen as the most important skill for the AI era by a landslide (59%).

What this means

We're witnessing a collective holding pattern. The industry believes in AI's potential but is waiting for someone else, namely, regulators, industry leaders, and peers, to move first.

The current state of AI adoption

Measured progress, not paralysis

AI adoption maturity

Let's start with the most revealing finding: 47% of organizations reported no AI use in clinical quality management today.

The gap between AI awareness and AI action

In the life science industry, one that has taken its due, intentional time to build processes, regulations, and standards that ensure the integrity of clinical trial data and patient safety, artificial intelligence stands at the opposite end of the spectrum. It brings speed, which can feel liberating and invigorating, but to the inherently cautious QA leader, it can also feel hasty and disconcerting.

47% with no current use

This doesn't necessarily signal fear of innovation. It reflects the reality that many organizations are still finding their footing, navigating uncertainty, and building the foundational understanding, governance, and capabilities necessary to implement AI responsibly. To avoid falling behind, companies with no current use of AI will eventually need to take the leap, even while regulations still feel nebulous.

32% in early experimentation

These organizations are learning how AI works in their context, understanding its limitations, and identifying where it can genuinely add value versus where it's just technological novelty.

19% with active use of AI in 1-2 workflows

This is a great sign of moving forward, slowly. Implement, learn, iterate. These companies are leaning into the discomfort (and excitement) of something new, one step at a time.

2% integrated/scaled across operations

This gap is significant, but it's supposed to be. Clinical quality isn't an industry where you pilot on Tuesday and scale on Wednesday. To us, the gap represents the necessary work of validation, governance development, and organizational readiness building.

Our take

While nearly 32% of respondents are experimenting and 20% are actively using AI, only 1.5% have been able to scale its implementation. The jump from pilot to production is the real barrier. People are getting stuck here because there’s too much at stake to scale frivolously. So, while confidence is high (7/10), the reality of scaling remains the challenge, and something we expect will take time to overcome.

Where AI is being applied

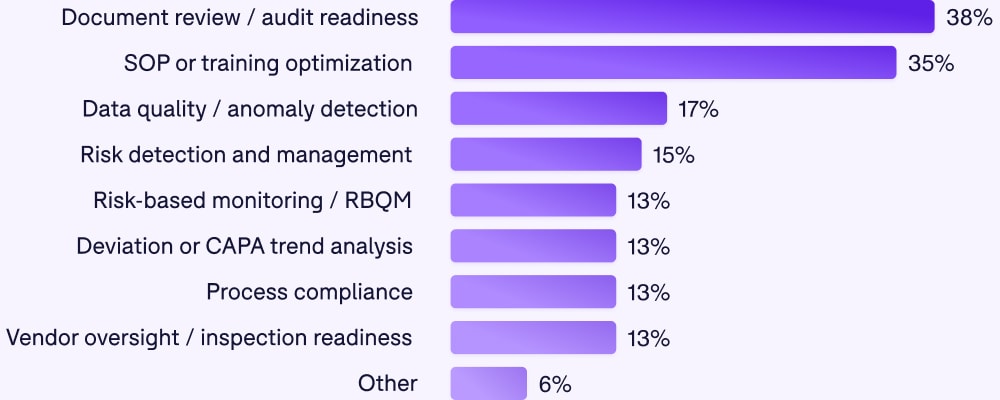

Among those who are using AI, here's where they're focusing:

What this means

These use cases reveal a pragmatic approach. Organizations aren't starting with the most complex, high-stakes applications. They're starting with high-volume, repeatable tasks (document review), pattern recognition (trend analysis, anomaly detection), and risk identification (RBQM).

These are areas where AI can add clear value without requiring perfect accuracy. A false positive in anomaly detection is frustrating. A false negative that directly impacts patient safety is catastrophic. The industry is correctly identifying where the risk/reward ratio makes sense.

The confidence-reality balance: why the gap makes sense

7/10 - Average confidence that AI can improve quality

1.5% - Organizations with scaled implementation

At first glance, this looks like a disconnect. If people believe in AI's potential, why aren't more organizations using it? But maybe we don’t need to see this as a disconnect. Instead, let’s consider it discernment.

Believing AI can improve quality (confidence) is different from believing we're ready to implement it responsibly (action). The gap represents healthy caution: organizations want to get this right, not just get it done.

This is the central tension in the report: the barriers to beginning responsible and sustainable AI implementation are in large part out of our control.

The Barriers

What’s holding organizations back

We asked respondents to identify their biggest barrier to AI adoption. The results were unambiguous.

Let’s dig into some of the data:

1. Regulatory uncertainty: a legitimate concern

This isn't about risk aversion disguised as regulatory concern. It's about the genuine challenge of implementing technology in a highly regulated environment where the rules are still being written.

Quality professionals are responsible for ensuring compliance. When regulatory guidance is evolving, ambiguous, or doesn't directly address their specific AI applications, they're rightfully concerned about:

- How to validate AI tools in ways that will satisfy future inspections

- What documentation is required to demonstrate AI oversight

- How to explain AI-informed decisions to auditors who may not understand the technology

This is professional responsibility, not resistance to innovation.

Our take

The solution isn't to ignore these concerns and move forward anyway. It's to engage proactively with regulators, document thoroughly, and share learnings across the industry so guidance can evolve based on real-world implementation.

Regulators are hesitant because they're still learning and developing their own knowledge in this area. They've shared guiding principles, and are planning to put regulations in place, but they're still essentially saying "we don't know, here's our thinking, do the best you can".

Shaun Hastings

Director of Quality Assurance, PHARMExcel

What jumps out immediately is that the top two barriers are not technical. They’re uncertainty and capability. That alone tells us AI adoption is being slowed less by what AI can do, and more by whether organizations feel safe, competent, and defensible using it. AI adoption in regulated QA environments is being blocked not by technology, but by confidence.

Donatella Ballerini

Head of eTMF Services, Montrium

Fear of the unknown. There is not full confidence that the information from AI is correct and also that individuals will have a review/validation process to verify information.

Dawn Niccum

Executive Vice President, QA, inSeption Group

2. Lack of internal expertise: the skills gap is real

The second-biggest barrier was knowledge. Organizations don't know how to implement AI in quality management, even when they want to.

This expertise gap shows up in multiple ways:

- Not knowing how to validate AI tools

- Not understanding how to interpret AI outputs

- Not knowing what questions to ask vendors

- Not having staff who can bridge quality and data science

The traditional QA professional was trained in GCP, SOPs, and audit readiness, not machine learning models, training data bias, or algorithmic validation.

There is a HUGE opportunity here, but to unlock it, organizations will need to train their employees.

Our take

The solution is investment in capability building: training programs, hiring strategies, and partnerships that bring AI expertise into quality teams. This takes time, but it's necessary time. We predict a lot of emphasis on this area in the coming years.

3. Cultural resistance: trust issues

Nearly 20% of respondents cited cultural resistance or trust concerns as the top barrier to implementing AI.

When quality professionals talk about "trust," they're often referring to:

- Trust that AI won't miss critical issues

- Trust that AI outputs are explainable and defensible

- Trust that the AI tools won’t hallucinate

- Trust that colleagues and leadership will accept AI-informed decisions

The "black box" problem (in reference to a system whose internal workings are a mystery to its users) isn't abstract in quality management. When an AI flags a deviation or recommends a site for monitoring, someone has to stake their professional credibility on that recommendation.

Overcoming cultural resistance means:

- Involving quality professionals early in AI selection and implementation

- Providing hands-on experience with AI tools in low-stakes environments

- Demonstrating value through pilots with clear success metrics

- Maintaining human oversight so professionals see AI as augmenting, not replacing, their expertise

- Sharing failures as well as successes so teams learn what to watch for

Organizations that invest in change management and stakeholder engagement will build trust. Those that mandate AI adoption without addressing concerns will face justified resistance.

Governance

The North Star for AI in clinical trials

Half of organizations have no clear owner for AI governance, making the ultimate question not IF we should adopt AI, but WHO should lead it. So who is responsible?

Governance ownership, at a glance

The data shows a split between:

- IT/Data Science (29%)

- Cross-functional teams (12%)

- Quality/QA (11%)

There’s no one right answer, but it doesn’t seem wise to make a single department entirely responsible.

IT/Data Science understands the technology but may not fully grasp the regulatory implications. Quality/QA understands compliance but may lack technical AI expertise. Cross-functional teams can bridge both but often struggle with accountability and decision-making speed.

There will likely be a learning curve here, and we may even see dedicated AI teams emerge within organizations.

The 64% "beginning to evaluate" is encouraging. It means most organizations surveyed recognize governance is necessary and are actively working on it. The 9% with governance in place are valuable early movers. Their frameworks, successes and failures alike, will inform industry best practices. The 27% "not at all prepared" have work to do. But even here, awareness that they're unprepared is better than proceeding too far without governance.

Our take

The solution is investment in capability building: training programs, hiring strategies, and partnerships that bring AI expertise into quality teams. This takes time, but it's necessary time. We predict a lot of emphasis on this area in the coming years.

50% with no clear owner is not a maturity issue. It’s a governance avoidance signal. Organizations are experimenting with AI, but many are quietly hoping someone else—regulators, IT, vendors, or “future policy”—will eventually tell them who’s accountable. That works… until AI moves from pilot to process.

Donatella Ballerini

Head of eTMF Services, Montrium

Companies and functions don't know where to start with AI. The nearly 50% that do not have a clear owner is an indication that data management and data quality are an afterthought. The 29% that responded IT/Data Science indicates that there is a Chief Data Officer (or similar) where all data, AI and automation decisions are being made and who, more likely than not, is the ultimate driver to enable AI throughout the organization.

Neel Patel

Director of Technology, Espita Life Science

What governance should address:

- How AI tools will be validated before use

- What documentation is required for AI-enabled processes

- How AI performance will be monitored over time

- What level of human oversight is required for different AI applications

- How AI decisions will be explained to auditors and regulators

- What happens when AI outputs are questionable or wrong

- How vendors will be assessed and managed for AI tools

AI Philosophy

Four thoughtful approaches to AI

Our read on this

Cautious Experimentation: These organizations are likely piloting AI in controlled environments, learning its capabilities and limitations, and building internal expertise before scaling.

We like this approach because it follows our “ready, set, go slow – but go” mentality.

Strategic Investment: These organizations are deliberately building AI capability (training people, establishing governance, modernizing systems) before widespread implementation.

Wait-and-See: These organizations are explicitly following others. There's wisdom in learning from early adopters, but there's risk in waiting too long, such as falling behind the competition. The key is knowing when to stop waiting and start acting, even if slowly.

Transformative: These organizations see AI as fundamental to quality's future. They'll be the early case studies, both successes and cautionary tales. The industry needs these pioneers, but not everyone should be a pioneer.

The right philosophy depends on your organization.

There's no single correct approach. The right philosophy depends on:

What matters is that your philosophy is intentional, not accidental. Choose your approach deliberately, resource it appropriately, and be honest about what it requires.

Looking ahead

How AI will shift QA roles in 3-5 years

We asked an open-ended question: "In your own words, how do you expect AI will change the role of QA professionals over the next 3–5 years?" The responses were remarkably consistent.

What Quality professionals said

“Over the next few years, I think AI will take a lot of the repetitive checking off QA's plate. That frees us up to do the higher-value work: using data to spot real risks, shaping smarter processes, and making sure the AI itself is used in a compliant, ethical way that people can trust.” 🤝

“Instead of compiling & summarizing data, human tasks will shift to thoughtfully and purposefully analyzing the data summaries/compilations.” 📊

“AI will actively work side by side with QA professionals to help maximize and produce better outcomes in compliance.” ✅

“I believe that the QA professionals will become better suited experts and industry leaders without the mundane activities they are doing today for a percentage of their role.” 📚

“The function will evolve from operational QA to strategic, analytical, and decision-support QA.” 🧠

“It would reduce the timeline for data review, draft and review documents and overall make quality processes efficient.” ⏱️

Five themes emerged repeatedly:

1. Shift from execution to oversight: Less doing, more reviewing and validating

2. Reduction in repetitive, mundane tasks: AI handles volume, humans handle judgment

3. More strategic, analytical work: Focus on interpretation, not data gathering

4. Risk-based approach becomes feasible: AI enables real-time risk identification at scale

5. Human expertise remains critical: For context, oversight, and final decision-making

Our take

Quality professionals are not afraid AI will replace them. They're anticipating it will elevate them and evolve their roles.

QA professionals need to be exceptional at the things AI can't do:

- Critical thinking about risk

- Understanding regulatory nuance and context

- Building governance frameworks that balance innovation with compliance

- Explaining and defending AI-informed decisions

- Identifying when AI outputs are wrong or misleading

As one might expect, not every QA professional will make this transition easily. Organizations that succeed with AI won't just implement new tools; they will upskill their people and provide them with the right training and support to expand their mindset and skills.

The skills that matter

What QA professionals will need to learn

Quality professionals intuitively understand that their value in an AI-enabled future is judgment—the distinctly human ability to assess context, evaluate reasonableness, and make decisions that balance multiple considerations.

- AI can flag anomalies, but humans decide which anomalies matter

- AI can identify trends, but humans interpret what those trends mean for the success of the clinical trial

- AI can generate reports, but humans validate whether those reports are accurate and defensible

Our take

The emphasis on "validation oversight" is particularly telling. Quality professionals aren't expecting to become data scientists. They're expecting to become AI validators, the people who ensure AI tools are fit for purpose, performing as expected, and producing trustworthy outputs. This is further reinforced by the fact that only 6% expect to be doing prompt engineering or model testing. They don't want to develop the AI; they want to oversee it.

Critical thinking is as much a skill to hone as AI comprehension. It’s interesting: to properly utilize and succeed at applying artificial intelligence, we must develop the most real and human of all our qualities: the faculty of critical thought and human judgment.

Critical thinking should dominate all that we do in both QA and the functions we support. AI has tremendous potential; however, it will not eliminate the need for a person to review the outputs and ensure that they make sense.

Dawn Niccum

Executive Vice President, QA, inSeption Group

Critical thinking is super important as newer AI technologies can look really reliable to start, but the ways they fail aren't the same as traditional computer systems. Leaders in this space need to recognize that, be able to see the opportunity through the noise and come up with frameworks that allow these systems to flourish.

Aaron Grant

Head of Innovation, Just In Time GCP

Critical thinking remains the most essential skill for QA professionals working with computerized systems, including those that use artificial intelligence. This skill is crucial not only for determining the most efficient approach to system validation but also for assessing the risks and benefits associated with implementing such systems.

Frank Henrichmann

Sr. Exec. Consultant, QFINITY

What the industry really needs

The guidance gap

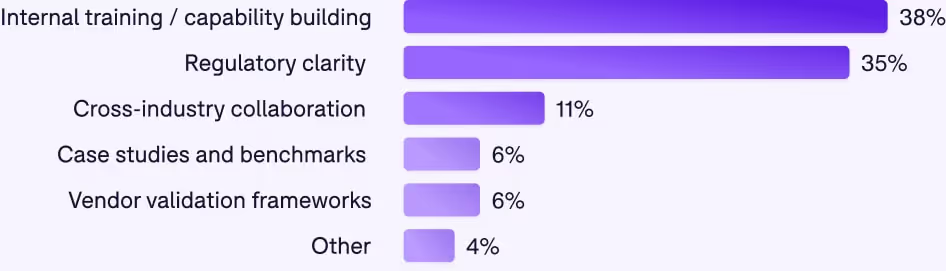

Internal training: the top need, where we have the most control

38% want internal training and capability building, and this is the most actionable need.

Unlike regulatory clarity (which requires external stakeholders), organizations can address capability gaps immediately.

What quality teams need to learn:

- Conceptual understanding of how AI/ML models work (not deep technical expertise, but solid comprehension)

- Validation approaches for AI tools and how they differ from traditional software validation

- Interpretation skills for assessing AI outputs and identifying potential errors

- Documentation practices for AI-enabled processes that will satisfy auditors

- Communication strategies for explaining AI to stakeholders, leadership, and regulators

This training doesn't require waiting for regulatory clarity or industry consensus. Organizations can build AI literacy now, positioning themselves to implement effectively when ready.

Regulatory clarity: a top need

35% want regulatory clarity, and this is entirely reasonable.

Quality professionals operate in a regulated environment. They need to understand how AI fits within existing regulatory frameworks and what documentation/validation will be expected.

The challenge: Regulators typically respond to industry practice rather than prescribing it in advance. Detailed guidance emerges from real-world implementations, inspections, and collaborative dialogue.

What this means practically:

The solution requires partnership:

- The industry must implement thoughtfully and engage proactively with regulators

- Regulators must provide frameworks that enable innovation while protecting patients

- Industry associations must facilitate dialogue between implementers and regulators

This is how regulatory clarity emerges, through careful implementation and collaborative learning.
